RL in a Heterogeneous Societal Environment

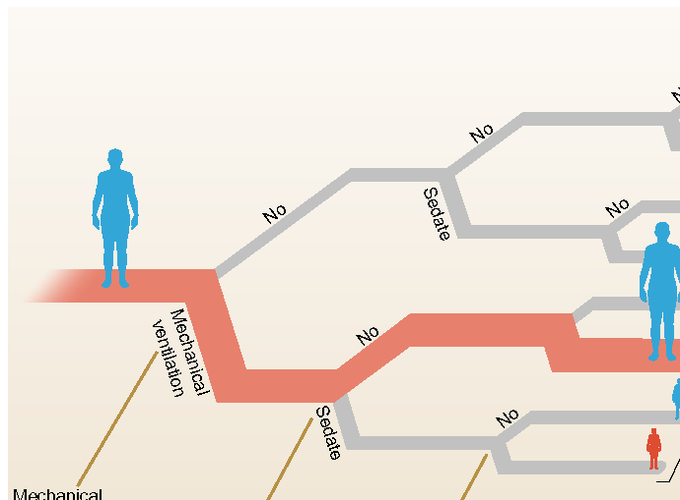

Reinforcement learning holds great promise for data-driven decision-making in social contexts such as healthcare, education, and business. However, classical methods that focus on the mean of the total return may yield misleading results when applied to the heterogeneous populations typically found in large-scale datasets. To address this issue, we introduce the $K$-Heterogeneous Markov Decision Process, a framework for sequential decision problems with latent population heterogeneity. Within this framework, we propose auto-clustered policy evaluation, which estimates the value of a given policy, and auto-clustered policy iteration, which estimates the optimal policy within a parametric policy class. Our auto-clustered algorithms automatically identify homogeneous subpopulations while simultaneously estimating the action-value function and the optimal policy for each subgroup. We establish convergence rates and construct confidence intervals for the estimators. Simulation results support our theoretical findings, and an empirical study on a real medical dataset confirms the presence of value heterogeneity and validates the advantages of the proposed approach.
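To make the point about population means concrete, here is a minimal Python sketch under simplifying assumptions that are not from the paper: a one-step (bandit) problem instead of a full MDP, two latent subgroups with hypothetical mean rewards, and plain k-means on per-individual reward estimates as a stand-in clusterer. It is not the auto-clustered estimator described above; names such as `TRUE_MEANS`, `LATENT_GROUPS`, and `kmeans` are illustrative only.

```python
import random

# Hypothetical toy setup (not from the paper): one-step decisions with two actions and
# two latent subgroups whose true mean rewards for (action 0, action 1) differ.
TRUE_MEANS = {"A": (1.0, 0.0), "B": (0.0, 2.0)}
LATENT_GROUPS = ["A"] * 6 + ["B"] * 4  # 10 individuals; labels are unobserved by the methods
N_OBS = 50      # simulated observations per individual per action
NOISE_SD = 0.1  # reward noise standard deviation


def simulate_individual_means(rng):
    """Return each individual's empirical mean reward for action 0 and action 1."""
    estimates = []
    for group in LATENT_GROUPS:
        means = []
        for action in (0, 1):
            draws = [rng.gauss(TRUE_MEANS[group][action], NOISE_SD) for _ in range(N_OBS)]
            means.append(sum(draws) / N_OBS)
        estimates.append(tuple(means))
    return estimates


def sq_dist(p, q):
    """Squared Euclidean distance between two equal-length tuples."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def kmeans(points, k=2, iters=20):
    """Plain Lloyd's k-means with farthest-point initialisation (a stand-in clusterer)."""
    centers = [points[0]]
    while len(centers) < k:
        # Next initial center: the point farthest from all centers chosen so far.
        centers.append(max(points, key=lambda p: min(sq_dist(p, c) for c in centers)))
    labels = [0] * len(points)
    for _ in range(iters):
        labels = [min(range(k), key=lambda j: sq_dist(p, centers[j])) for p in points]
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                centers[j] = tuple(sum(coord) / len(members) for coord in zip(*members))
    return labels, centers


def true_value(actions_by_individual):
    """Population-average true mean reward when individual i takes actions_by_individual[i]."""
    total = sum(TRUE_MEANS[g][a] for g, a in zip(LATENT_GROUPS, actions_by_individual))
    return total / len(LATENT_GROUPS)


rng = random.Random(0)
estimates = simulate_individual_means(rng)

# Pooled approach: one action for everyone, chosen by the population-mean estimate.
pooled = [sum(e[a] for e in estimates) / len(estimates) for a in (0, 1)]
pooled_action = max((0, 1), key=lambda a: pooled[a])

# Clustered approach: group individuals first, then pick the best action per cluster.
labels, centers = kmeans(estimates, k=2)
cluster_action = [max((0, 1), key=lambda a: c[a]) for c in centers]
clustered_actions = [cluster_action[lab] for lab in labels]

print("pooled action:", pooled_action, "value:", round(true_value([pooled_action] * len(estimates)), 2))
print("clustered value:", round(true_value(clustered_actions), 2))
```

With these hypothetical numbers, the pooled rule should choose action 1 for everyone (true average reward 0.8), even though action 1 gives the group-A majority nothing. Grouping individuals first and then choosing an action per cluster should recover action 0 for group A and action 1 for group B, raising the average to 1.4.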

The most recent version of our paper is available at https://arxiv.org/abs/2202.00088. We are currently working on several related projects and would be happy to discuss them with you; if you are interested, please don’t hesitate to reach out.